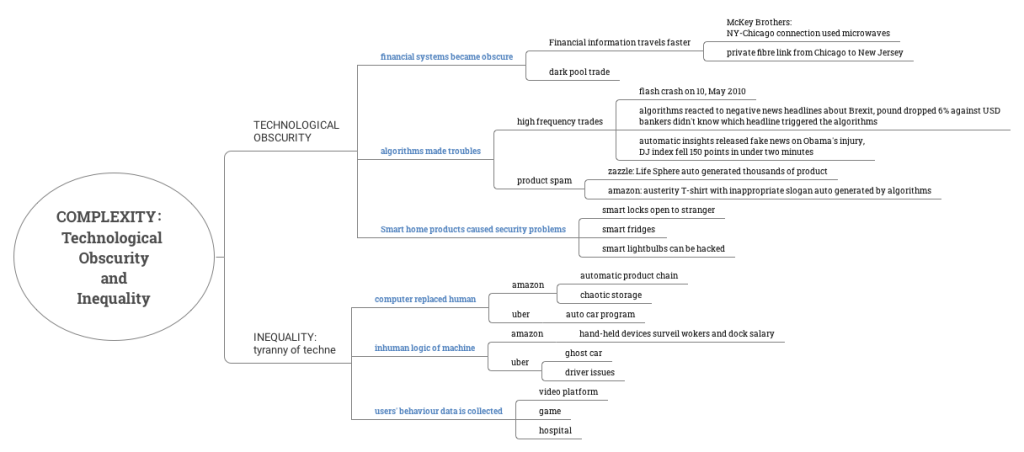

Chapter 5, “Complexity”, mainly discusses technological obscurity and inequality.

On the topic of technological obscurity, the author discussed the opacity of the modern financial system, the trouble caused by algorithms, and the security problems created by smart home products.

Firstly, in the era of digital finance, big companies spend millions to build faster networks and trade in dark pools to hide their true intentions. As a result, the market is becoming opaque to outsiders and visible only to insiders.

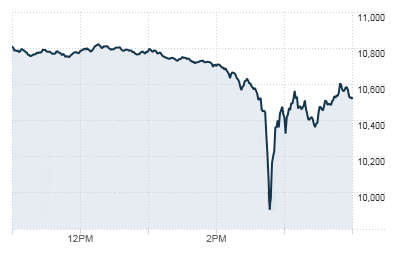

Secondly, algorithms can sometimes be dumb. High-frequency trading uses powerful computer programs to execute a large number of orders in fractions of a second, relying on complex algorithms that analyze multiple markets and place orders based on market conditions. But on 6 May 2010, the Dow Jones index fell 600 points in 5 minutes and then recovered those 600 points over the following 25 minutes. In the chaos of those twenty-five minutes, 56 billion USD changed hands; this is the famous “flash crash”. The causes of the event are still debated, but it is suspected that when the index dropped to a certain level, one set of algorithms was triggered to sell automatically, which is why the index fell so rapidly in those 5 minutes. Later, when the index reached another low point, another set of algorithms was triggered to buy automatically, and the index gradually recovered over the next 25 minutes.

Another example of obscurity in high-frequency trading happened in October 2016. When some negative news headlines about Brexit were released, algorithms reacted automatically and sent the British pound down 6% against the US dollar in less than 2 minutes. Yet the programmers themselves could not tell which headline had triggered the selling.

Apart from high-frequency trading, algorithms have also caused trouble in creative products. For instance, T-shirts with offensive slogans such as “Keep Calm and Hit Her” were once sold on Amazon without anyone at Amazon being aware of them, because the slogans were generated automatically by software and the T-shirts existed only as entries in a database.

Lastly, smart home products can become security holes: there have been cases of smart locks opening for strangers, and of hackers breaking into a smart fridge and from there into the Google account linked to it. All in all, people are using opaque and poorly understood computation to manage their property, and are inserting it at the very bottom of Maslow’s hierarchy of needs (food and sleep), precisely the point where we are most vulnerable.

On the second topic, inequality, the author talked about how AI is gradually replacing humans, how inhuman the logic of machines is, and how users’ data is collected.

Firstly, humans are being replaced by AI. For example, Amazon acquired a warehouse-automation company and adopted “chaotic storage” to reduce its need for human workers. Uber invested heavily in self-driving cars, aiming to replace its human drivers.

Secondly, there is an inhuman logic built into these programs. In Amazon’s warehouses, workers need hand-held devices to work alongside the robots, and these sensor-equipped devices surveil the workers at the same time. If workers return late from lunch or take a rest, the system docks their pay.

Amazon’s hand-held device in a warehouse. Source: https://blog.aboutamazon.com/operations/5-things-you-dont-know-about-safety-in-amazon-warehouses

Lastly, users’ data is being collected. Not only are users’ preferences collected on video platforms like YouTube and Netflix, but machines in hospitals are also collecting patients’ data.

In short, the complexity of contemporary technologies is a driver of inequality, and the logic that drives technological deployment might be tainted at the source. It concentrates power in the hands of a small number of people who understand and control these technologies. The result of this wholesale investment in computational processing (of data, of goods, of people) is the elevation of efficiency above all other objectives.

In conclusion, the author held a very pessimistic view of complex computational technology. On the one hand, people are inserting complex algorithms everywhere before understanding them well. On the other hand, digitization concentrates power in the hands of a small group of people and leads to the “tyranny of techne”. Together, these trends deepen inequality in society.

Source: https://www.sandiegouniontribune.com/news/public-safety/story/2019-12-20/3-year-ban-on-police-use-of-facial-recognition-technology-in-california-to-start-in-the-new-year

In my opinion, the inequality caused by technology is an ethical problem, and when an ethical problem becomes serious, there should be laws to regulate it. Take facial recognition, for example: its use in border protection and police surveillance is an area where, without careful controls and human oversight, serious problems can arise. To address these concerns, the government of San Francisco banned the use of facial recognition by police and city agencies. Although a ban does reduce the risk, the opportunity cost of giving up these new technologies is too high. A better solution is to adopt an “ethics by design” mindset and implement these technologies within a digital ethics framework. This way, we can realize their value while reducing the ethical risks.

Tracy Chan on Bridle, James: New Dark Age: Chapter 5 Complexity