At the beginning of the chapter, the tale of the US Army's attempt at machine recognition of tanks is used to raise a question: what can we know about what a machine knows? This question is the topic of the whole chapter.

First, the question is not a new one.

In the history of artificial intelligence, the first two theories to emerge were connectionism and symbolism. For about 40 years they debated one question: what is intelligible about intelligence? The former claimed that intelligence is an emergent property of the connections between neurons, so a machine could think by imitating the human brain. The latter insisted that intelligence is the product of the manipulation of symbols, so some part of knowledge has to be reasoned out by humans.

Later, other theories such as neoliberalism joined the debate. In the end, the reigning belief is that order emerges when human bias is absent.

The next part covers the uses of machine intelligence in daily life, classified according to how much humans understand about that intelligence.

1. Encoded Biases

To prove an intelligent system, image recognition is usually the first step. Many researchers and technology companies have run trials in image recognition, but once their work touches on face recognition, it raises ethical questions. Two examples: the first is an automated system that makes inferences about criminality. Its physiognomic principle was attacked because commentators thought that, like phrenology, it implied cultural biases. The second is How-Old.net, which was used to guess the age of child refugees being admitted to Britain. When criticized as unethical, both parties responded conservatively, saying something along the lines of “it’s just a fun app, not meant for the assumptions made in the media.”

So it is clear that encoded bias has hindered the development of machine learning to a certain extent.

There is an assumption that these systems need not worry about encoded bias, because a machine can not only reinforce human biases but also detect and correct them.

Here are two more examples of encoded biases. A Nikon camera, preprogrammed with software that waits until all its subjects are looking good, refused to capture an image. The error message read, “Did someone blink?”

There was also a brand of webcam that could not recognize black faces. Neither company designed these shortcomings on purpose.

They reveal the historic prejudices deeply encoded in our data sets, which are the frameworks on which we build present knowledge and decision making. Technology does not emerge from a vacuum; it is the reification of a particular set of beliefs and desires.

2. Amenable to Our Understanding

Machine learning is not confined to research papers; it is already used in various areas of our daily life.

It is used in the police and justice systems.

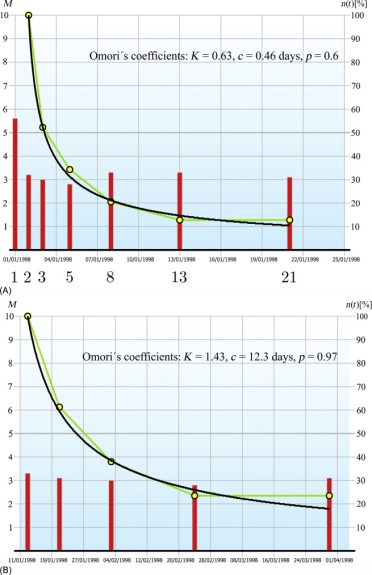

Omori’s law, which describes the pattern of earthquake aftershocks, has on the face of it nothing to do with crime. Yet a model based on the law (ETAS) has proved useful in crime prediction.
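To make the idea concrete, here is a minimal sketch of how an Omori-style decay can feed a self-exciting event rate. It uses the modified Omori form, rate = k / (c + t)^p, and adds a contribution from each past event to a constant background rate. The function names and all parameter values are hypothetical, chosen only for illustration. A full ETAS model also weights events by magnitude and location, which this sketch leaves out, and nothing here is taken from any real predictive-policing product.

```python
def omori_rate(t, k=1.0, c=1.0, p=1.0):
    """Modified Omori law: rate of follow-on events at time t after a trigger.

    k, c and p are illustrative values, not fitted to any data.
    """
    if t < 0:
        return 0.0
    return k / (c + t) ** p


def self_exciting_intensity(now, past_events, background=0.1, k=1.0, c=1.0, p=1.0):
    """Expected event rate at time `now`.

    A constant background rate plus one Omori-style term for every
    earlier event, so recent events raise the rate more than old ones.
    """
    triggered = sum(
        omori_rate(now - t_event, k, c, p)
        for t_event in past_events
        if t_event < now
    )
    return background + triggered


if __name__ == "__main__":
    events = [1.0, 4.0, 4.5]  # hypothetical times of past incidents
    for now in (5.0, 10.0, 50.0):
        print(now, round(self_exciting_intensity(now, events), 3))
```

The printed rate falls as the past events recede in time, which is the whole content of the analogy: an incident temporarily raises the expected rate of further incidents nearby in time.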

ETAS is a model amenable to our understanding. But what of the new models of thought produced by machines, which make decisions and produce consequences that we do not understand?

3. Unseeable Space

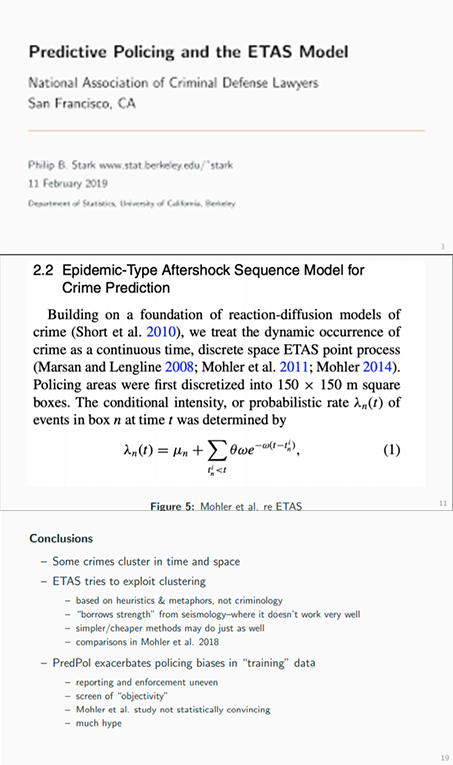

The Google Translate software illustrates the two kinds of models. At first, the translation software used statistical language inference; in 2016 it switched to a neural network developed by Google Brain. Instead of two-dimensional connections between words, the network built a map of the entire territory. This is the unseeable space in which machine learning makes its meaning. Beyond this lies what we are incapable of even understanding.

4. Infinite Fun Space

In chess and Go, Deep Blue defeated the reigning world chess champion, and AlphaGo defeated the Korean Go professional Lee Sedol. The machines made moves unlike any a human would make, moves beyond imagination. The place these moves come from is called Infinite Fun Space in Iain M. Banks’s novels.

5. Unknowingness

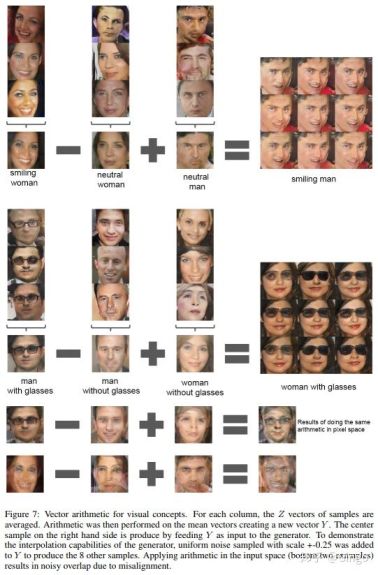

Furthermore, some machine intelligence does not stay within Infinite Fun Space; it creates an unknowingness in the world. By generating new images of people or rooms from data sets of millions of photographs, machines start to interleave with our own memories and to change history. As in the picture on the left, a set of smiling women, unsmiling women and unsmiling men can be computed to produce entirely new images of smiling men. We become confused: Who are these people? What are they smiling at? Where are these places? Did I ever take that photo?
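The "computation" on faces can be pictured as plain vector arithmetic: smiling women minus unsmiling women plus unsmiling men. The sketch below is only a toy under stated assumptions. Each face is a hand-made two-number vector `[maleness, smile]`, whereas a real image generator works with learned vectors of many dimensions whose axes have no such readable labels. All names and values are hypothetical.

```python
def mean(vectors):
    """Component-wise average of a list of equal-length vectors."""
    n = len(vectors)
    return [sum(v[i] for v in vectors) / n for i in range(len(vectors[0]))]


def add(a, b):
    return [x + y for x, y in zip(a, b)]


def sub(a, b):
    return [x - y for x, y in zip(a, b)]


# Toy face vectors: [maleness, smile]. Values are invented for illustration.
smiling_women = [[0.0, 1.0], [0.25, 0.75]]
unsmiling_women = [[0.0, 0.0], [0.25, 0.25]]
unsmiling_men = [[1.0, 0.0], [0.75, 0.25]]

# "Smile" direction = smiling women - unsmiling women; apply it to unsmiling men.
smile_direction = sub(mean(smiling_women), mean(unsmiling_women))
smiling_man = add(smile_direction, mean(unsmiling_men))

if __name__ == "__main__":
    print("smile direction:", smile_direction)
    print("new vector:", smiling_man)
```

The resulting vector scores high on both axes although no smiling man was ever in the input. In a real generator, that vector would then be decoded into a picture of a person who never existed.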

6. Unconscious

What the machine can learn is not only human behaviour but also our unconscious.

A program called DeepDream was designed to better illustrate the internal workings of inscrutable neural networks. Normally a network is fed millions of images at its input, but DeepDream reverses the sequence: an image is fed in at the end of the network, and the result is analysed to see what the network wants to see. One constant that recurs throughout DeepDream’s creations is the image of eyes, and what it reveals is Google’s own unconscious, composed of our memories and actions, processed by constant analysis and tracked for corporate profit and private intelligence.
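The idea of asking a network what it "wants to see" can be sketched as gradient ascent on the input: keep the network fixed and repeatedly nudge the image so that a chosen unit responds more strongly. The toy below assumes a single linear unit over a four-pixel "image", so the gradient with respect to each pixel is just that pixel's weight. The real DeepDream applies the same principle to layers of a deep convolutional network. The names `eye_detector` and `dream` are hypothetical.

```python
def activation(weights, image):
    """Response of one linear unit to an image (a flat list of pixels)."""
    return sum(w * x for w, x in zip(weights, image))


def dream(weights, image, steps=10, step_size=0.1):
    """Gradient ascent on the input, not on the weights.

    For a linear unit, d(activation)/d(pixel_i) equals weights[i], so each
    step moves every pixel along its weight and clamps it to [0, 1].
    """
    image = list(image)
    for _ in range(steps):
        image = [
            min(1.0, max(0.0, x + step_size * w))
            for x, w in zip(image, weights)
        ]
    return image


# Hypothetical unit that prefers an alternating bright/dark pattern.
eye_detector = [1.0, -1.0, 1.0, -1.0]
start = [0.5, 0.5, 0.5, 0.5]  # a flat grey image
dreamed = dream(eye_detector, start)

if __name__ == "__main__":
    print("before:", start, activation(eye_detector, start))
    print("after: ", dreamed, activation(eye_detector, dreamed))
```

The grey image drifts toward the pattern the unit was tuned for. This is why features that a network has seen very often, such as eyes, keep surfacing in the generated pictures.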

Finally, the dominance of machines is slipping beyond human understanding. The responsibility of researchers is to figure out as much as possible about what machines know. Criticism and anxiety are not necessary.

Machine intelligence can be treated positively. In chess, a human and a machine playing together against a solo machine has been found to be the best way to compete, because it draws on the respective skills of humans and machines as required, rather than pitting one against the other. Through cooperation with machines, we can gain a deeper understanding of how machines make decisions. Cooperation need not be limited to machines: it can extend to all other nonhuman entities. This forms a new model of work, one that looks for universal justice in the present.